What Is Incrementality Testing?

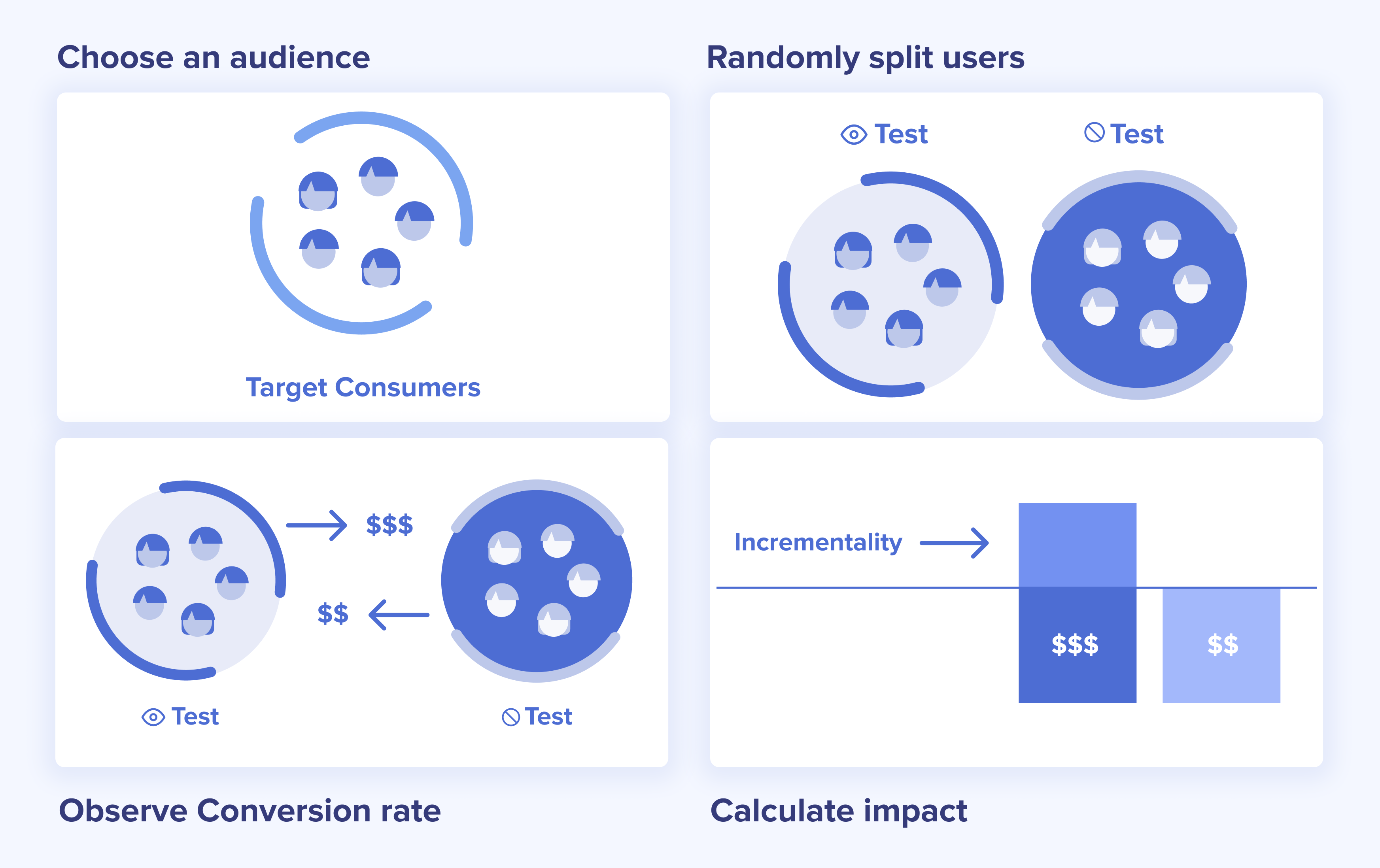

Incrementality testing in digital marketing assesses the added value of a specific marketing strategy by comparing a group exposed to the strategy (test group) to a group that isn’t (control group). It aims to answer the question, “Did this marketing activity genuinely yield additional results?”

How to measure incrementality

If you are wondering how to calculate incrementality, there are several options:

- In-house incrementality test: You can run an incrementality test in-house. Split your audience into two segments, expose one segment to the marketing strategy while keeping the other unexposed, then analyse the performance difference between the two groups.

- Incrementality testing services: Alternatively, you can use incrementality testing services offered by providers such as SegmentStream, Measured, and INCRMNTAL, among others. Costs for such services can range from a few thousand pounds to tens of thousands, depending on the complexity of your campaign.
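For the in-house option, the comparison between the two groups can be sketched in a few lines of Python. This is a minimal illustration rather than any provider's method: the function name `incrementality_summary`, its parameters, and the figures in the example are all hypothetical, and the significance check is a standard pooled two-proportion z-test chosen here as an assumption.

```python
from math import erf, sqrt


def incrementality_summary(test_users, test_conversions,
                           control_users, control_conversions):
    """Compare an exposed (test) group with an unexposed (control) group.

    Hypothetical helper for illustration; all names are assumptions.
    """
    if test_users <= 0 or control_users <= 0:
        raise ValueError("both groups need at least one user")

    test_rate = test_conversions / test_users
    control_rate = control_conversions / control_users

    # Conversions the test group would be expected to produce anyway,
    # if it had converted at the control group's rate.
    baseline = control_rate * test_users
    incremental_conversions = test_conversions - baseline

    # Relative lift is undefined when the control group has no conversions.
    lift = None
    if control_rate > 0:
        lift = (test_rate - control_rate) / control_rate

    # Pooled two-proportion z-test: is the difference likely to be noise?
    pooled = (test_conversions + control_conversions) / (test_users + control_users)
    std_err = sqrt(pooled * (1 - pooled) * (1 / test_users + 1 / control_users))
    if std_err > 0:
        z = (test_rate - control_rate) / std_err
        normal_cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
        p_value = 2 * (1 - normal_cdf)  # two-sided
    else:
        z, p_value = 0.0, 1.0

    return {
        "test_rate": test_rate,
        "control_rate": control_rate,
        "incremental_conversions": incremental_conversions,
        "lift": lift,
        "z": z,
        "p_value": p_value,
    }


if __name__ == "__main__":
    # Hypothetical figures: 10,000 users per group.
    result = incrementality_summary(10_000, 230, 10_000, 200)
    for name, value in result.items():
        print(f"{name}: {value}")
```

With these made-up figures the test group converts about 15% better than the control group, yet the p-value stays above the conventional 0.05 threshold. A lift that looks large can still be within noise when the groups are small, so it is worth checking significance before acting on the result.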

Another way to measure incrementality: attribution by SegmentStream

SegmentStream offers its own approach to measuring the incremental impact of performance marketing campaigns. It analyses hundreds of data points, including impressions, clicks, CRM details, and user behaviour on your website, aiming to provide a deeper understanding of each channel's and campaign's actual influence on your revenue.

FAQ

How are incrementality experiments different from A/B experiments?

A/B tests compare two or more variants of something to see which performs best. Incrementality tests check whether a strategy brings any additional results compared with not running it at all.

What is incrementality in marketing?

Incrementality is the measure of the additional value or results (like conversions or sales) that a particular marketing effort or strategy brings, beyond what would have occurred without it. In other words, it helps determine if a campaign genuinely made a difference or if the results would have happened anyway.
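One common way to express this as a number is relative lift, shown here as an illustration rather than a definition taken from any particular provider. The symbols are assumptions introduced for this sketch: $R_{\text{test}}$ is the result per user (for example, the conversion rate) in the group exposed to the campaign, and $R_{\text{control}}$ is the same metric in the unexposed group.

$$
\text{incremental lift} = \frac{R_{\text{test}} - R_{\text{control}}}{R_{\text{control}}}
$$

A lift of zero means the campaign added nothing beyond what would have happened anyway, while a positive lift is the share of extra results that can be credited to the campaign.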

How do you measure incrementality?

- In-house testing: Segment your audience, expose one group to the strategy, and compare results with a group that remains unexposed.

- Incrementality testing providers: Consider services like SegmentStream, Measured, and INCRMNTAL for professional incrementality testing.

- SegmentStream’s AI attribution: SegmentStream offers a data-rich approach, analysing impressions, clicks, CRM data, and user behaviour for deep insights.

What is incremental attribution?

Incremental attribution is an approach used in digital marketing to measure the true impact of specific advertising efforts on conversions or revenue. One such approach is offered by SegmentStream. This attribution solution helps businesses identify which channels genuinely contribute to their revenue by analysing hundreds of data points, from impressions, clicks, and CRM details to user behaviour on their website.

What’s a real-world example of incrementality testing?

A brand found that sales were 15% higher among users who saw its new Instagram ads than among those who didn't, indicating the ads' direct impact.
